AI governance matters in digital transformation because it connects innovation to accountability. It reduces risk, increases adoption and turns isolated AI efforts into scalable capability.

Strong governance combines clear ownership, practical frameworks and embedded controls across the AI lifecycle. When built into digital strategy, it supports faster delivery and better outcomes without losing trust.

What AI Governance Means In Digital Transformation

AI governance is the set of decision rights, policies, controls and oversight that guide how AI is selected, built, deployed and monitored. It connects AI work to business strategy, risk management and operational execution.

In digital transformation, governance keeps AI aligned with process redesign, platform modernization and new operating models. It also defines how models interact with data pipelines, APIs and human workflows.

Strong governance is not only about restrictions. It creates clarity so teams can move faster with fewer rework cycles and fewer late-stage approvals.

Why AI Tools Alone Do Not Ensure Transformation Success

AI tools can produce demos quickly, but transformation requires repeatable outcomes across teams and time. Without governance, each group chooses different tools, data definitions and evaluation methods.

That fragmentation makes scale expensive and fragile. It also increases security exposure and raises the chance of inconsistent customer experiences.

Governance fills the gap between what a tool can do and what an organization can sustain. It standardizes how value is defined, how risk is managed and how accountability is assigned.

The Business Benefits Of Strong AI Governance

AI governance improves performance by reducing uncertainty and avoiding preventable failures. It helps leaders fund AI initiatives that can be adopted broadly, not only piloted locally.

It also improves trust, which directly affects usage. When teams believe outputs are reliable and traceable, adoption increases and change management becomes easier.

- Faster time to value. Shared standards for data, evaluation and deployment cut duplicated effort and shorten delivery cycles.

- Lower operational cost. Reusable components, approved tooling and consistent MLOps practices reduce maintenance and vendor sprawl.

- Higher-quality decisions. Clear metrics, model documentation and monitoring improve decision accuracy and reduce noise.

- Stronger compliance posture. Audit-ready processes and controlled access support privacy, security and regulatory requirements.

- More resilient transformation. Governance makes AI reliable during reorganizations, platform migrations and leadership changes.

These benefits compound over time when governance is embedded into the operating model, not treated as a one-time review.

Key Risks Of Weak AI Governance

Weak governance creates risk that is hard to see until it becomes expensive. Many failures are not technical problems, but accountability and process problems.

Risk grows quickly when AI decisions touch customer communications, pricing, eligibility, hiring or financial forecasting. In these domains, errors can become reputational and legal issues.

- Data misuse and privacy breaches. Uncontrolled datasets, unclear consent rules and excessive access expand exposure.

- Model drift and silent performance decay. Outputs degrade as inputs change, but no one notices without monitoring and triggers.

- Bias and unfair outcomes. Poor training data, weak testing and missing human oversight can create discriminatory impacts.

- Security weaknesses. Prompt injection, data exfiltration and insecure integrations can compromise systems.

- Unclear accountability. When ownership is vague, incidents take longer to resolve and lessons do not become standards.

Reducing these risks does not require slowing down. It requires consistent guardrails and the ability to prove they are working.
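
One way to prove that a drift guardrail is working is to compare live inputs against a training-time baseline on a schedule. The following is a minimal sketch, assuming a single numeric feature and a Population Stability Index (PSI) check. The function names, the bin count and the 0.2 alert threshold are illustrative assumptions, not values this article prescribes.

```python
import math
from typing import Sequence


def psi(baseline: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a current sample."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")

    lo, hi = min(baseline), max(baseline)
    # Equal-width bins over the baseline range; fall back to 1.0 if it is constant.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_alert(baseline: Sequence[float], current: Sequence[float],
                threshold: float = 0.2) -> bool:
    """True when the PSI exceeds the (assumed) alert threshold."""
    return psi(baseline, current) > threshold
```

In practice the alert would route to the named owner of the model, so that a drifting input triggers a review rather than going unnoticed.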

Core Elements Of An AI Governance Framework

An AI governance framework should be practical, measurable and integrated with existing governance structures. The goal is to define how decisions are made and how AI is controlled across its lifecycle.

It works best when it covers strategy, people, process and technology together. A framework that only writes policies without operational mechanisms will not survive contact with delivery teams.

- Principles and policy. Clear rules for acceptable use, data handling, privacy and model transparency.

- Use case intake and prioritization. A consistent method to score value, risk, feasibility and readiness.

- Data governance alignment. Definitions for data quality, lineage, access controls and retention requirements.

- Model risk management. Standards for validation, bias testing, explainability and approval thresholds.

- MLOps and deployment controls. Versioning, change management, rollback plans and environment separation.

- Monitoring and incident response. Metrics, alerts, an on-call owner and post-incident reviews that update standards.

- Documentation and audit readiness. Model cards, decision logs and evidence for internal and external review.
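
The use case intake element above can be made repeatable with a simple scoring function. This is a sketch under stated assumptions: the 1-to-5 rating scale, the weights and the names `UseCase`, `priority_score` and `rank` are hypothetical and would need to be agreed by whoever owns intake.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    value: int        # 1 (low) to 5 (high) expected business value
    risk: int         # 1 (low) to 5 (high) potential for harm
    feasibility: int  # 1 (hard) to 5 (easy) to build with current skills and data
    readiness: int    # 1 (not ready) to 5 (ready) process and data readiness


# Hypothetical weights: risk counts against the score, the rest count toward it.
WEIGHTS = {"value": 0.4, "feasibility": 0.2, "readiness": 0.2, "risk": -0.2}


def priority_score(use_case: UseCase) -> float:
    """Weighted score; higher means the use case should be considered earlier."""
    for field in WEIGHTS:
        rating = getattr(use_case, field)
        if not 1 <= rating <= 5:
            raise ValueError(f"{field} must be between 1 and 5, got {rating}")
    return round(sum(w * getattr(use_case, f) for f, w in WEIGHTS.items()), 2)


def rank(use_cases: list[UseCase]) -> list[UseCase]:
    """Order use cases from highest to lowest priority score."""
    return sorted(use_cases, key=priority_score, reverse=True)
```

A score like this is only a starting point for discussion. An organization might instead keep risk out of the score and use it solely to decide which review path a use case takes.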

Once these elements are defined, the next step is to make them easy to follow in daily delivery work.

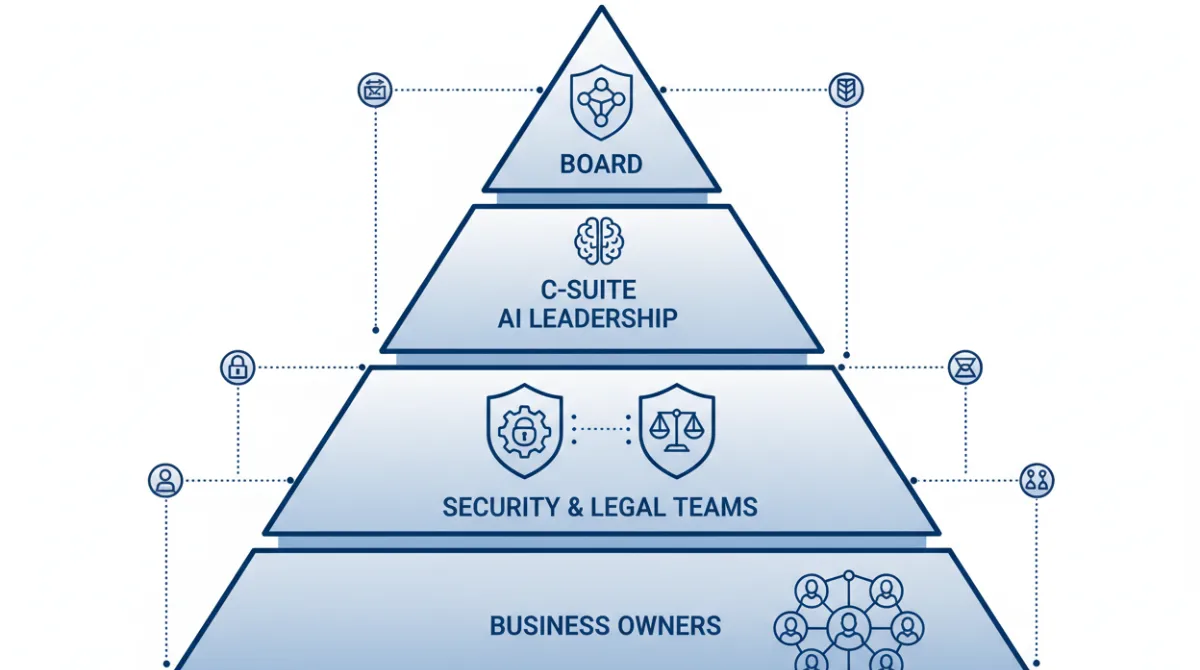

Who Should Own AI Governance In An Organization?

AI governance needs clear ownership, but it cannot live in only one department. A single-owner model often becomes either too restrictive or too disconnected from delivery realities.

A common approach is shared ownership with explicit decision rights. Executive sponsorship sets priorities, while cross-functional oversight makes standards realistic and enforceable.

- Board and executive leadership. Set risk appetite, approve high-impact policies and fund core capabilities.

- Chief Data and AI leadership. Own standards for AI delivery, platform choices and model lifecycle controls.

- Security and privacy teams. Define security requirements, access controls and privacy safeguards.

- Legal and compliance. Interpret regulations, review high-risk uses and maintain audit evidence expectations.

- Business owners. Define outcomes, approve use cases and accept accountability for operational impacts.

With ownership set, governance can move from policy documents into execution with a consistent cadence of review and improvement.

How AI Governance Helps Scale AI Responsibly

Scaling AI responsibly means expanding adoption without letting risk grow faster than the controls that contain it. Governance enables this by standardizing the AI lifecycle, from intake through retirement.

It also creates reusable building blocks such as approved datasets, evaluation templates and deployment pipelines. That reuse shortens delivery time while keeping quality consistent.

Responsible scale depends on performance management as much as guardrails. Governance defines what success looks like, which metrics matter and when to pause or roll back.

| Governance Area | What Good Looks Like | How It Supports Transformation |
|---|---|---|
| Use Case Intake | Clear value and risk scoring with documented approval | Funds the right initiatives and reduces abandoned pilots |
| Data And Access Controls | Least-privilege access, lineage and quality checks | Improves trust in analytics and automation outputs |
| Model Validation | Bias testing, accuracy targets and stress testing before release | Reduces customer harm and rework after launch |
| Monitoring And Response | Drift alerts, error budgets and clear escalation paths | Keeps AI reliable as processes and markets change |

This structure makes scaling less dependent on heroics. It makes AI delivery a repeatable part of the operating model.
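
The "when to pause or roll back" decision can be written down as an explicit rule rather than left to judgment in the moment. The sketch below assumes an error budget expressed as an allowed error rate over a reporting window, where a "failed" request is any output flagged as wrong (for example, one a human overrode). The names and the 25% pause threshold are hypothetical.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    PAUSE = "pause"          # stop new rollouts and investigate
    ROLL_BACK = "roll_back"  # revert to the last approved model version


def error_budget_remaining(total_requests: int, failed_requests: int,
                           allowed_error_rate: float) -> float:
    """Fraction of the window's error budget still unspent (negative if overspent)."""
    if total_requests <= 0 or allowed_error_rate <= 0:
        raise ValueError("total_requests and allowed_error_rate must be positive")
    allowed_failures = total_requests * allowed_error_rate
    return 1 - failed_requests / allowed_failures


def decide(remaining: float, pause_below: float = 0.25) -> Action:
    """Map the remaining budget to an action using assumed thresholds."""
    if remaining <= 0:
        return Action.ROLL_BACK
    if remaining < pause_below:
        return Action.PAUSE
    return Action.CONTINUE
```

Writing the rule down this way also gives the escalation path a clear trigger: the owner is paged when `decide` returns anything other than `Action.CONTINUE`.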

Common AI Governance Mistakes In Digital Transformation

Many organizations either over-govern early or under-govern until something breaks. Both patterns create delays and reduce confidence in AI initiatives.

These mistakes often come from treating AI as a standalone project rather than a capability that touches security, data and customer experience.

- Policies without enforcement. Guidelines exist, but no tooling, checkpoints or owners back them up.

- One-size-fits-all approvals. Low-risk uses face the same friction as high-risk uses, which slows adoption.

- Ignoring model lifecycle costs. Budgeting stops at launch, leaving monitoring and retraining unfunded.

- Shadow AI proliferation. Teams deploy unapproved tools that bypass data controls and create inconsistent outputs.

- Missing human-in-the-loop design. Users are not trained or empowered to override outputs, correct them or report issues.

Avoiding these pitfalls requires governance that is lightweight where risk is low and rigorous where impact is high.

Best Practices For Building AI Governance Into Digital Strategy

Governance is most effective when it is designed into digital strategy rather than added as a gate at the end. That means aligning it with portfolio management, enterprise architecture and change management.

It also means selecting controls that teams can follow naturally within existing delivery workflows. Friction should be reserved for high-risk decisions, not routine experimentation.

- Define decision rights early. Clarify who approves tools, data use and production releases so teams do not stall in ambiguity.

- Tier use cases by risk. Apply stricter validation and oversight to customer-facing and regulated domains while keeping low-risk work fast.

- Standardize evaluation. Use shared metrics for accuracy, robustness and usability so results are comparable across teams.

- Embed controls in delivery pipelines. Automate checks for security, data leakage and versioning to reduce manual reviews.

- Train roles, not only teams. Create role-based guidance for product, engineering, data science and operations.

- Measure adoption and outcomes. Track usage, incident rates and business KPIs to prove value and refine policy.
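
Risk tiering and embedded pipeline controls can be combined into a single automated release gate. The following is a minimal sketch, assuming three tiers and a set of evidence flags produced earlier in the delivery pipeline. The tier names, control names and functions are all hypothetical placeholders.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = 1     # internal experimentation, no customer impact
    MEDIUM = 2  # internal decisions with business impact
    HIGH = 3    # customer-facing or regulated domains


# Hypothetical control names; each tier inherits the controls of the tier below.
_BASE = frozenset({"model_version_pinned", "secrets_scan_passed"})
_MEDIUM = _BASE | {"evaluation_report_attached"}
_HIGH = _MEDIUM | {"bias_test_passed", "human_approval_recorded"}

REQUIRED_CONTROLS = {
    RiskTier.LOW: _BASE,
    RiskTier.MEDIUM: _MEDIUM,
    RiskTier.HIGH: _HIGH,
}


def missing_controls(tier: RiskTier, evidence: set[str]) -> frozenset[str]:
    """Controls required for this tier that the pipeline has no evidence for."""
    return REQUIRED_CONTROLS[tier] - evidence


def release_allowed(tier: RiskTier, evidence: set[str]) -> bool:
    """A release passes the gate only when no required control is missing."""
    return not missing_controls(tier, evidence)
```

Because low-risk work needs only the base controls, routine experimentation passes quickly, while a high-risk release is blocked until the additional evidence exists.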

When these practices are applied consistently, governance becomes an accelerator. It supports innovation with predictable, auditable execution.

Why AI Governance Is Central To Long-Term Transformation Success

Long-term transformation depends on repeatability, trust and the ability to evolve safely. AI governance provides the structure that keeps AI aligned with strategy as priorities shift and new capabilities appear.

It also strengthens organizational learning. Each deployment, incident and review feeds back into standards, enabling continuous improvement rather than repeated mistakes.

AI will keep changing how processes run and how decisions are made. Governance ensures that change remains intentional, responsible and durable.