The AI coding war is no longer a niche contest between research labs. It has become a high-stakes race where platform leaders, chip makers and cloud providers compete to define how software gets built and maintained.

Behind the headlines sits a practical shift. Code generation, code understanding and automated testing are moving from optional add-ons into the default workflow for many engineering teams.

What Is The AI Coding War And Why Does It Matter Now?

The AI coding war is the competition to produce models that can reliably write, edit, explain and verify software across real repositories. Winning is not only about impressing with demos, but about reducing engineering cycle time without increasing production risk.

It matters now because modern software development is constrained by time, staffing and complexity. A strong coding model can raise output per developer, compress release schedules and make legacy modernization less daunting.

At the same time, the stakes are higher than general chat. A single incorrect change can break builds, introduce security flaws, or create subtle data bugs that take days to detect.

Why Coding Models Have Become The Core AI Battleground

Coding is a structured domain with fast feedback loops. A model's output can be checked by compiling it, running tests and measuring bug rates, which makes progress easier to measure than in many other generative tasks.
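To show how "run the tests" becomes a single score, the sketch below implements the unbiased pass@k estimator introduced alongside the HumanEval code benchmark. For each task, n candidate solutions are sampled and c of them pass the tests; pass@k estimates the chance that at least one of k attempts passes. The function names and the sample results are illustrative assumptions, not data from any real model.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased estimate of pass@k for one task.

    n: candidate solutions sampled for the task
    c: candidates that passed the task's tests
    k: attempts a user is allowed
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # If fewer than k candidates failed, every group of k contains a passing one.
    if n - c < k:
        return 1.0
    # 1 - P(all k drawn candidates fail) = 1 - C(n - c, k) / C(n, k)
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over tasks, given (n, c) pairs per task."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # Hypothetical results: (samples generated, samples passing) per task.
    results = [(10, 3), (10, 0), (10, 10)]
    print(round(mean_pass_at_k(results, k=1), 3))
```

Averaging per task, rather than pooling all samples, keeps one easy task with many passing samples from dominating the score.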

Enterprise value is also clearer. Organizations spend heavily on software engineering, quality assurance and maintenance, so even small productivity gains can translate into major cost savings.

Another driver is lock-in. If a model becomes deeply embedded in an integrated development environment, code review system and continuous integration pipeline, it influences language choices, cloud usage and developer tool subscriptions.

Key technical capabilities separate leaders from followers.

- Repository-scale context. Understanding project structure, dependencies and internal conventions without losing accuracy.

- Strong reasoning over code. Following constraints, handling edge cases and producing changes that match intent.

- Testing awareness. Writing and updating unit tests, integration tests and snapshots in a maintainable way.

- Secure coding behavior. Avoiding vulnerable patterns and respecting least-privilege access assumptions.

These capabilities require more than larger parameter counts. They demand better training data, stronger evaluation and tighter feedback from real developer workflows.

How Tech Companies Are Competing To Lead AI Coding

Competition is playing out across model training, distribution and developer experience. Some teams emphasize raw model quality, while others prioritize integration into existing ecosystems.

Three pressure points show up repeatedly in product strategy.

- Model performance in real repos. Higher pass rates on tests and fewer regressions after merges.

- Latency and cost. Fast suggestions and affordable inference so the tool stays on by default.

- Enterprise controls. Data handling, audit logs, policy enforcement and private deployment options.

This mix explains why the market is not decided by benchmarks alone. A model that is slightly weaker but easier to deploy inside a regulated environment can win significant share.

Data Advantages And Training Strategy

Code models benefit from diverse, high-quality training corpora and careful filtering. Beyond raw source code, modern systems learn from commits, pull request discussions, tests, build logs and documentation that ties intent to implementation.

Fine-tuning and preference optimization matter as much as pretraining. The best systems learn to follow repository conventions, match style guides and minimize risky edits that touch unrelated files.

Infrastructure And Distribution Power

Access to compute shapes the pace of improvement. Larger training runs, faster experimentation and broader deployment depend on GPU availability, networking and inference optimization.

Distribution matters too. When a model ships inside a popular editor, a code hosting platform, or a cloud console, it gains daily usage that generates feedback signals and sticky habits.

The Role Of Developer Tools In The AI Coding Race

Developer tools are where model capability becomes daily value. Suggestions inside the editor, context from the repository and automated workflows around testing and review determine whether developers trust the output.

Modern AI coding assistants increasingly behave like a system of tools rather than a single prompt box. They coordinate search, code navigation, refactoring, test execution and documentation updates.

Common tool patterns are becoming standard across teams.

- Inline completions. Short, fast snippets that follow local code context and typing intent.

- Chat-based refactoring. Larger changes that span files, supported by diff previews.

- Codebase Q&A. Explanations grounded in the actual repository and dependency graph.

- Automated test generation. New tests for edge cases and regression coverage tied to recent changes.

- Pull request assistance. Summaries, review suggestions and risk flags for security and performance.

As these features mature, the battleground shifts from novelty to reliability. The winners will be the tools that quietly prevent incidents and reduce rework.

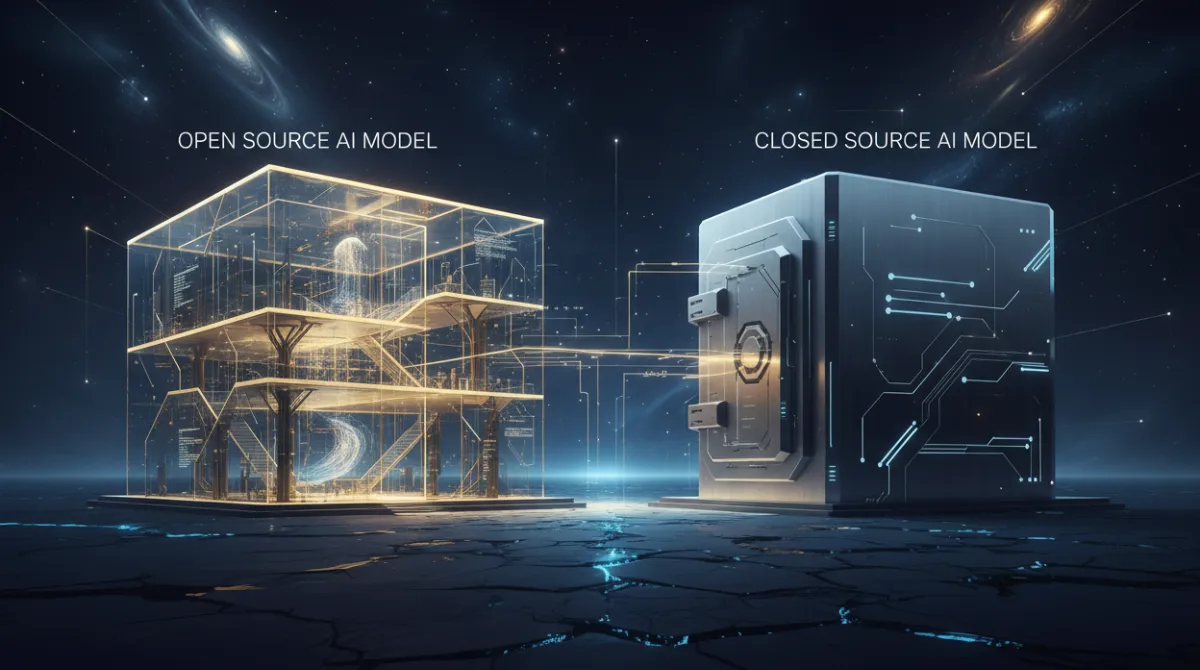

Open Source Vs Closed Models In AI Coding

Open and closed approaches each bring advantages and both can succeed depending on constraints. The trade-off is not only transparency versus performance, but also governance, customization and long-term control.

Open models can be audited, self-hosted and fine-tuned to internal code. This is attractive for organizations that need strict data boundaries, predictable pricing and the ability to adapt models to proprietary frameworks.

Closed models often deliver strong out-of-the-box performance and rapid iteration. They may ship advanced safety layers, tooling integrations and managed infrastructure that reduces operational burden.

The decision usually comes down to a few practical questions.

- Data policy. Whether source code and prompts can leave the organization.

- Customization needs. How much the model must learn internal libraries and patterns.

- Total cost. Comparing usage-based fees with self-hosting hardware and operations.

- Support and accountability. Expectations for uptime, incident response and compliance evidence.
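The total-cost question often reduces to a break-even calculation: usage-based fees grow with token volume, while self-hosting is roughly a fixed monthly cost as long as the hardware has enough capacity. The sketch below assumes a blended per-token price and a flat self-hosting cost; every number in it is a hypothetical placeholder, not a quote from any vendor.

```python
from dataclasses import dataclass


@dataclass
class ManagedPlan:
    usd_per_million_tokens: float  # blended input/output price (assumed)


@dataclass
class SelfHostedPlan:
    hardware_usd: float        # up-front cost of the serving hardware
    amortization_months: int   # months over which that cost is spread
    ops_usd_per_month: float   # power, hosting, maintenance and on-call time


def monthly_cost_managed(plan: ManagedPlan, tokens_per_month: float) -> float:
    return plan.usd_per_million_tokens * tokens_per_month / 1_000_000


def monthly_cost_self_hosted(plan: SelfHostedPlan) -> float:
    # Flat cost: assumes the hardware can serve the whole monthly load.
    return plan.hardware_usd / plan.amortization_months + plan.ops_usd_per_month


def break_even_tokens(managed: ManagedPlan, hosted: SelfHostedPlan) -> float:
    """Monthly token volume at which both options cost the same."""
    return monthly_cost_self_hosted(hosted) / managed.usd_per_million_tokens * 1_000_000


if __name__ == "__main__":
    managed = ManagedPlan(usd_per_million_tokens=5.0)
    hosted = SelfHostedPlan(hardware_usd=72_000, amortization_months=36, ops_usd_per_month=8_000)
    print(f"{break_even_tokens(managed, hosted):,.0f} tokens/month")
```

With these placeholder figures, self-hosting costs 2,000 + 8,000 = 10,000 USD per month, so it only becomes cheaper above 2 billion tokens per month. The model ignores utilization, capacity limits and staff hiring, which can move the real answer substantially.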

Many teams land on a hybrid approach that uses a managed model for general work and a private model for sensitive repos.
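A hybrid setup needs a routing rule that decides which model sees which code. The sketch below routes by repository name using glob patterns; the pattern list and the backend labels are hypothetical, and a real deployment would more likely key off repository metadata or data-classification tags.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: repositories whose code must stay on the private model.
SENSITIVE_REPOS: tuple[str, ...] = ("payments-*", "*-secrets", "identity-core")

MANAGED_BACKEND = "managed"  # placeholder label for a hosted model
PRIVATE_BACKEND = "private"  # placeholder label for a self-hosted model


def choose_backend(repo_name: str, patterns: tuple[str, ...] = SENSITIVE_REPOS) -> str:
    """Send requests from sensitive repos to the private model, all others to the managed one."""
    name = repo_name.lower()  # avoid leaks caused by case differences in repo names
    if any(fnmatchcase(name, pattern) for pattern in patterns):
        return PRIVATE_BACKEND
    return MANAGED_BACKEND


if __name__ == "__main__":
    for repo in ("payments-api", "docs-site", "vault-secrets"):
        print(repo, "->", choose_backend(repo))
```

This version fails open: any repo not matched goes to the managed model. A stricter policy would invert the rule and allow only explicitly approved repos to leave the organization.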

Challenges Slowing Down AI Coding Progress

Despite rapid gains, coding models still struggle with reliability. Software engineering is full of hidden constraints and models can fail in ways that look plausible until tests or production traffic reveal problems.

Several bottlenecks show up across organizations and languages.

- Long-context limitations. Large repos require stable retrieval and summarization so the model does not miss key files.

- Evaluation gaps. Benchmarks do not fully reflect real workflows like migrations, performance tuning, or multi-service changes.

- Security risks. Vulnerable patterns, dependency confusion and unsafe code generation can slip through when teams over-trust suggestions.

- Tooling friction. If suggestions are slow or hard to review, developers disable features and revert to manual work.

- Legal and governance uncertainty. Policies on training data, licensing and code provenance vary across industries.

Progress depends on better verification. More teams are pairing generation with unit tests, static analysis, policy checks and controlled rollouts to keep quality stable.
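A verification gate can be sketched as a function that accepts a model-generated patch only when every check passes. In the sketch below, the policy check limits edits to allowed directories, `ast.parse` serves as a cheap syntax-only static check for Python files, and `run_tests` is a hypothetical hook that would apply the patch in a sandbox and run the suite. `GateResult` and `verify_patch` are illustrative names; real pipelines would add linters, security scanners and dependency checks.

```python
import ast
from dataclasses import dataclass, field
from pathlib import PurePosixPath
from typing import Callable


@dataclass
class GateResult:
    accepted: bool
    reasons: list[str] = field(default_factory=list)


def verify_patch(
    changed_files: dict[str, str],
    allowed_dirs: tuple[str, ...],
    run_tests: Callable[[dict[str, str]], bool],
) -> GateResult:
    """Accept a patch (path -> new contents) only if policy, syntax and tests all pass."""
    reasons = []
    for path, source in changed_files.items():
        p = PurePosixPath(path)
        # Policy check: relative paths only, no "..", and inside the allowed directories.
        if p.is_absolute() or ".." in p.parts or not any(p.is_relative_to(d) for d in allowed_dirs):
            reasons.append(f"{path}: outside allowed directories")
        # Static check: Python files must at least parse.
        if path.endswith(".py"):
            try:
                ast.parse(source, filename=path)
            except SyntaxError as exc:
                reasons.append(f"{path}: syntax error on line {exc.lineno}")
    # Run the slower test suite only if the cheap checks passed.
    if not reasons and not run_tests(changed_files):
        reasons.append("test suite failed")
    return GateResult(accepted=not reasons, reasons=reasons)


if __name__ == "__main__":
    patch = {"src/util.py": "def add(a, b):\n    return a + b\n"}
    print(verify_patch(patch, ("src", "tests"), run_tests=lambda files: True))
```

Ordering the checks from cheapest to most expensive keeps feedback fast and avoids spending CI time on patches that were never going to be accepted.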

What The AI Coding War Means For Developers And Businesses

For developers, the immediate impact is workflow change. More time shifts toward specifying intent, reviewing diffs and improving tests, while less time goes into boilerplate and repetitive refactors.

This does not remove the need for engineering judgment. It increases the value of clear requirements, strong code review and deep understanding of system behavior under load.

For businesses, the opportunity is faster delivery with better predictability. The risk is believing productivity gains are automatic and cutting corners on testing, observability and secure software development practices.

Leaders can turn the AI coding war into an advantage by setting guardrails.

- Define acceptable use. Clarify which repos, data types and tasks are allowed for AI assistance.

- Measure outcomes. Track cycle time, defect rates, incident frequency and review load rather than counting lines of code.

- Invest in verification. Expand automated tests, linting, dependency scanning and build gates.

- Train teams. Teach prompt discipline, safe refactoring habits and how to challenge model output.
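Measuring outcomes rather than lines of code can start with a small summary over merged changes. The sketch below assumes a hypothetical `MergedChange` record, exported from a team's code-hosting and incident tools, that includes a team-defined flag for AI-assisted work. It reports median cycle time, change failure rate and review load per group.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class MergedChange:
    opened: datetime        # when the pull request was opened
    merged: datetime        # when it was merged
    caused_incident: bool   # linked to a production incident or rollback
    review_comments: int    # a rough signal of review load
    ai_assisted: bool       # tagged by the team's own convention (assumed)


def summarize(changes: list[MergedChange]) -> dict[str, float]:
    """Outcome metrics for one group of merged changes."""
    hours = [(c.merged - c.opened).total_seconds() / 3600 for c in changes]
    return {
        "changes": len(changes),
        "median_cycle_time_hours": median(hours),
        "change_failure_rate": sum(c.caused_incident for c in changes) / len(changes),
        "mean_review_comments": sum(c.review_comments for c in changes) / len(changes),
    }


def compare(changes: list[MergedChange]) -> dict[str, dict[str, float]]:
    """Report the same metrics for AI-assisted and unassisted changes."""
    groups = {
        "ai_assisted": [c for c in changes if c.ai_assisted],
        "unassisted": [c for c in changes if not c.ai_assisted],
    }
    return {name: summarize(group) for name, group in groups.items() if group}
```

The comparison is descriptive only. If teams choose which tasks to hand to an assistant, the two groups will differ for reasons unrelated to the tool, so trends over time are usually more informative than a single split.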

These practices make model choice less risky and keep productivity gains from turning into operational debt.

The Future Of AI Coding Models And Competition

The next phase of the AI coding war will reward systems that can plan, execute and validate multi-file changes with minimal supervision. Agentic workflows will matter, but only if they are bounded by strong permissions and reliable checks.
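One way to picture a bounded agentic workflow is a loop in which the model only proposes actions, while the surrounding harness enforces a tool allowlist, a step budget and an independent completion check. In the sketch below, `propose`, the tool names and `is_done` are hypothetical stand-ins: `propose` would wrap a model call, and `is_done` would run the test suite.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    tool: str      # e.g. "edit_file" or "run_tests" (hypothetical tool names)
    argument: str


def run_bounded_agent(
    propose: Callable[[list[str]], Action],
    tools: dict[str, Callable[[str], str]],
    allowed_tools: frozenset[str],
    is_done: Callable[[], bool],
    max_steps: int = 10,
) -> tuple[bool, list[str]]:
    """Execute proposed tool calls within permissions and a step budget."""
    transcript: list[str] = []
    for step in range(max_steps):
        action = propose(transcript)
        if action.tool not in allowed_tools or action.tool not in tools:
            # Permission boundary: record the refusal instead of executing.
            transcript.append(f"step {step}: denied {action.tool}")
            continue
        result = tools[action.tool](action.argument)
        transcript.append(f"step {step}: {action.tool}({action.argument}) -> {result}")
        # Validation: finish only when an independent check passes.
        if is_done():
            return True, transcript
    return False, transcript


if __name__ == "__main__":
    files = {"calc.py": "def add(a, b): return a - b"}
    script = iter([Action("delete_repo", ""), Action("edit_file", "calc.py")])

    def fix(path: str) -> str:
        files[path] = files[path].replace("a - b", "a + b")
        return "edited"

    ok, log = run_bounded_agent(
        propose=lambda transcript: next(script),  # scripted stand-in for a model
        tools={"edit_file": fix},
        allowed_tools=frozenset({"edit_file"}),
        is_done=lambda: "a + b" in files["calc.py"],
        max_steps=5,
    )
    print(ok)
    print(*log, sep="\n")
```

Because the allowlist, budget and completion check all live outside the model, a bad proposal can waste steps but cannot expand what the agent is permitted to do.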

Expect more emphasis on end-to-end software lifecycle support. That includes requirements tracing, architecture-aware refactoring, migration planning and continuous maintenance tasks like dependency updates and test repair.

Model competition will also move closer to the platform layer. Pricing, on-device inference, private cloud deployment and governance features will influence adoption as much as raw accuracy.

Key Differentiators Likely To Matter Most

The most competitive coding models will combine strong generation with strong verification and strong integration.

| Competition Area | What Teams Will Optimize | Why It Matters |
|---|---|---|
| Context And Retrieval | Repo indexing, semantic search, stable long-context behavior | Reduces wrong edits caused by missing dependencies and conventions |
| Verification And Safety | Test execution, static analysis, policy checks, secure defaults | Improves trust and lowers incident risk |
| Toolchain Integration | IDE, CI, code review, issue tracking, docs generation | Turns model skill into daily productivity gains |
| Deployment And Governance | Private hosting, audit logs, access control, data isolation | Enables use in regulated and security-sensitive environments |

This framing helps teams evaluate vendors and internal builds based on outcomes, not marketing claims.

Conclusion

The AI coding war is reshaping how software is produced, reviewed and maintained. Better coding models can accelerate delivery and reduce toil, but only when paired with rigorous verification and clear governance.

Developers who strengthen fundamentals like testing, secure coding and system understanding will benefit most. Businesses that invest in toolchain integration and measurable quality controls will turn this competition into sustained advantage.